I’ve always thought of servers as being like babies. They aren’t good at communicating what’s wrong, they wake you up during the night, and it’s hard to think of the perfect name for them.

Four months ago, we welcomed a new baby boy into our family. He’s been such a joy to have around. I couldn’t imagine a world without him.

As my wife and I have cared for our son, we’ve noticed he’s immune to some of the tried-and-true tricks that worked for our other kids. We’ve had to relearn some things to keep him happy. I couldn’t help but compare his routine to the servers I manage on a daily basis.

As with infants, you learn what works for each individual server by watching how it reacts to different situations and responding when it cries out.

The necessary evil: being on call

Being on call means you need to be available 24/7 to handle anything that goes wrong, even if it’s in the middle of the night. It’s by far the worst part of the job, especially when things aren’t running smoothly.

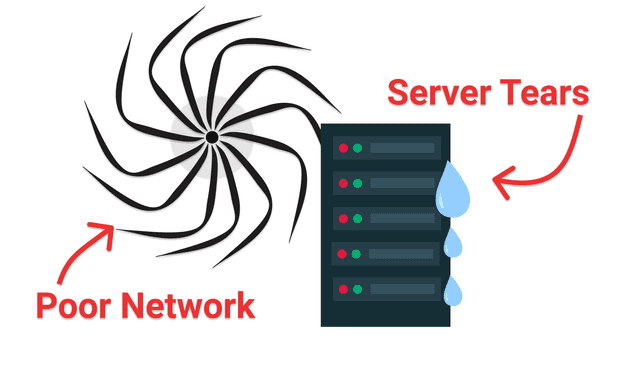

Parents are on call for babies that cry during the night. I’m on call for servers that bawl over poor network conditions.

The on-call shift is a necessary evil for system admins, developers, and often support staff. I’ve always held the title of developer, and I’ve been on call for the past five or six years. No matter how much I’ve prepared, I find it can be stressful.

Software has changed the world and made everyone more reliant than ever on 24/7 access (at least in theory!). A common side effect of this new reality is teams that need to respond as quickly as possible when something goes wrong (or even when it doesn’t).

Count yourself lucky if you haven’t been required to be on call. I predict that it’ll be more and more common to have developers work closely with sysadmins and support staff to resolve outages quickly. So if it hasn’t happened yet, it soon will.

Dealing with the pressure

When my wife and I had our first child, we had no idea what to expect. We had listened to how others described the experience, but nothing quite prepared us for having our own child. We were completely overwhelmed and didn’t know what to do. Luckily, we had help along the way.

I had a similar feeling of being overwhelmed when I was put on my first on-call shift. I had graduated about 10 months prior and had switched from a mobile engineering job to a backend position. I had almost no experience with servers.

After a month on the job, my manager decided to put the new hires on call. I got lumped into the batch despite being so new. Thoughts of inadequacy raced through my mind.

I can’t be on call, I just barely started

Gah… I knew taking this job was a mistake

I’m just going to let the whole company burn down… That’ll teach ’em.

I ended up surviving, but I wish I had known some things during those first few months. Now that I’ve managed hundreds of servers, I know a thing or two. I’ve kept servers running in several environments, ranging from doing absolutely everything manually all the way to fully managed solutions such as Kubernetes (similar to daycare, if you have a child).

Learn by doing

If there’s one thing I’ve found helpful, it’s this: dive in and learn by doing. I don’t mean prancing around reading the filenames in the source code. I mean launching your own server, changing variables in a staging environment, and volunteering to do the next deployment.

As I’ve watched my servers “grow up”, I’ve settled on a few consistent ways to prepare for on-call shifts. Maybe you’d call me an expert… I actually think of it as being slowly roasted by several fires over the past decade (“fire” is the common term for when servers are down and customers are unhappy).

Here are my tips for preparing for your next on-call shift.

Tips for being on call

1. Get access to servers and accounts

If you’re going to be on call when a server goes down, you’ll need, at the very least, access to it so you can see what went wrong.

While it’s always best to be proactive, you may also consider milking this excuse to delay being put on call as long as possible. The perfect time to mention that you don’t have all the right permissions to help is usually right after an outage (or, even better, during one), when your boss is frustrated that more developers aren’t on call. You might get an extra month or two before the inevitable happens.

After you’ve ensured you can remotely connect to servers, log into the various metric dashboards, and have permissions to the infrastructure accounts (AWS, GCP, Azure, etc.), you’re ready for your first outage… right?

Well, not quite. There are a few more things to consider, like that inner voice that keeps reminding you that you have absolutely no idea what you’re doing and are terrified of being put on call. No worries: the next best thing to do is learn your escalation policies.

2. Learn escalation policies

Aside from getting your hands dirty, learning about escalation policies is my next highest recommendation for calming your nerves.

Make sure you know who to contact, and how to reach them, when you can’t resolve an issue. That person is often called the secondary: the person you fall back on. Hopefully your job doesn’t expect you to put out fires on your own.

Anything can happen, so make sure you know how to pull more people into the loop.
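
To make the idea concrete, here’s a minimal sketch of how a tiered escalation policy usually behaves: if nobody acknowledges a page before a tier’s timeout runs out, the next tier gets paged too. The tier names and timeouts are hypothetical placeholders, not any particular tool’s defaults; the real policy lives in your paging tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Tier:
    name: str             # who gets paged at this level (hypothetical)
    timeout_minutes: int  # how long to wait for an ack before escalating


def who_to_notify(policy: List[Tier], minutes_unacked: int) -> List[str]:
    """Return everyone who has been paged after `minutes_unacked` minutes
    without an acknowledgement."""
    notified = []
    elapsed = 0
    for tier in policy:
        notified.append(tier.name)  # a tier is paged as soon as it's reached
        elapsed += tier.timeout_minutes
        if minutes_unacked < elapsed:
            break  # still inside this tier's window, so don't escalate further
    return notified


# A hypothetical three-tier policy.
POLICY = [
    Tier("primary", 15),
    Tier("secondary", 15),
    Tier("engineering manager", 30),
]
```

With this policy, `who_to_notify(POLICY, 20)` returns `['primary', 'secondary']`: twenty minutes of silence is enough to pull in your secondary.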

3. Check your calendar

Be aware of when your shift is coming up. Servers tend to get upset at the most inconvenient times.

There’s nothing like being paged just as you’ve arrived at your in-laws’ for dinner, without the tools you need to resolve it. You’ll end up having to leave and either drive home for your laptop or stop by the office, which makes being on call a lot more tedious. Maybe you actually wanted an excuse to leave your in-laws’; in that case, carry on. For the rest of us, make sure you’re not caught unprepared.

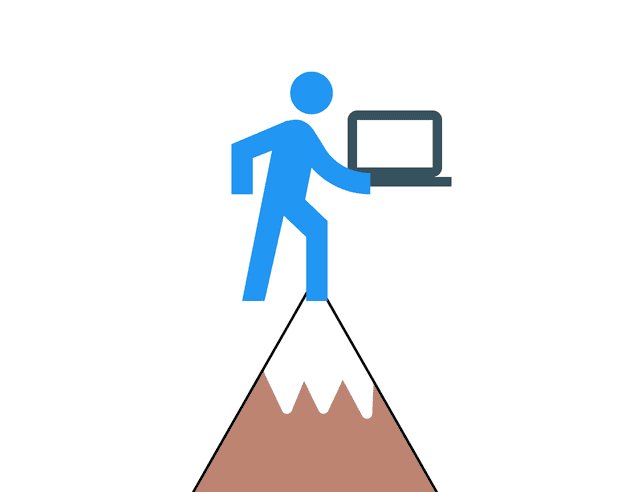

4. Bring a laptop everywhere

Instead of making emergency trips home to grab your laptop, simply plan on hauling it everywhere. I’ve found that it’s really not that big of a hassle to leave the laptop in the car. Even if I’m just stopping at a park for a walk with the kids, I like to have it easily accessible.

A good rule of thumb is to be within 10 minutes of your laptop during your shift. If you’re driving, please escalate to the next person. There’s no sense in losing your life to respond to an incident. In fact, the company will be worse off because they’ll have to hire someone to replace you. It’s better to wait until you can safely pull over and help out.

You can even take it a step further and keep an “on call” laptop or Chromebook stored in each of your vehicles. Even a Chromebook gives you a full keyboard and lets you connect remotely to your servers. This strategy means you don’t have to check your schedule as often or remember to bring your laptop.

5. Double check notification settings

You might get paged while you’re sleeping, so make sure your phone’s settings will actually wake you up.

I’ve been interrupted in the middle of a good dream more than once. When this happens, I send an invoice directly to my boss requesting he pay the full cost of my lost dream.

On a more serious note, PagerDuty and other on-call apps let you send a quick test message. It’s good to check that it can override your Do Not Disturb settings too. You might need to add PagerDuty or Slack as a favorite contact or a trusted friend.
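
If you’d rather script the check than rely on the app’s test button, one option is to trigger a test incident through PagerDuty’s Events API v2 and confirm your phone actually wakes you up. This is a swapped-in technique, not what the in-app test does. It assumes you have an integration routing key (the placeholder `routing_key` below) for a service that pages only you; otherwise you’ll wake up whoever else is on call.

```python
import json
import urllib.request

EVENTS_URL = "https://events.pagerduty.com/v2/enqueue"


def build_test_page(routing_key: str,
                    summary: str = "TEST: checking on-call notifications") -> urllib.request.Request:
    """Build (but don't send) a trigger event for PagerDuty's Events API v2."""
    body = {
        "routing_key": routing_key,  # placeholder: your service's integration key
        "event_action": "trigger",
        "payload": {
            "summary": summary,
            "source": "notification-check",  # hypothetical source label
            # Critical severity; assumes your service's urgency rules page
            # loudly on critical events, like a real outage would.
            "severity": "critical",
        },
    }
    return urllib.request.Request(
        EVENTS_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> int:
    """Send the event over the network and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Run `send(build_test_page(routing_key))` before your shift starts, then resolve the test incident once your phone has done its job.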

6. Follow up on pages

Do more than simply acknowledge outages. Respond with a quick comment about what you plan to do.

Even if it’s a false positive, say something. I like typing the phrase “Flappy server”. Then submit a fix or make a ticket so it doesn’t page the next person.
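
One common fix for a flappy check is to page only after several consecutive failures, so a single blip doesn’t wake anyone. Many alerting tools have a setting for this (Prometheus alerting rules, for example, have a `for` duration); the class below is just a minimal, tool-agnostic sketch of the idea, and the threshold of three is an arbitrary assumption.

```python
from collections import deque


class ConsecutiveFailureAlert:
    """Page only when the last `threshold` health checks all failed."""

    def __init__(self, threshold: int = 3):
        self.threshold = threshold
        self.recent = deque(maxlen=threshold)  # only the most recent results
        self.paging = False  # True while an outage has already been paged

    def record(self, healthy: bool) -> bool:
        """Record one check result; return True only when a new page should fire."""
        self.recent.append(healthy)
        all_failed = len(self.recent) == self.threshold and not any(self.recent)
        if all_failed and not self.paging:
            self.paging = True
            return True
        if healthy:
            self.paging = False  # recovered, so a future outage can page again
        return False
```

Fed the results `False, True, False, False, False`, it only fires on the last one: the third failure in a row.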

At one company I worked for, we had a policy of creating outage avoidance opportunities (OAOs). After every outage, we’d make follow-up tickets broken down into simple tasks of about an hour of work or less.

Here are some examples:

- Set a reminder to renew the SSL certificate

- Install logrotate so the server stops filling up its disk

- Upgrade the node pool to autoscale so pods can be scheduled

We’d schedule one or two a week. Since most of our outages were recurrences of previous ones, the whole system’s uptime slowly improved over time.
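
For the SSL item in that list, the “reminder” can be a small scheduled script instead of a calendar entry. Here’s a minimal sketch using only Python’s standard `ssl` and `socket` modules; the 30-day threshold is an assumption, and how you alert on the result is up to you.

```python
import socket
import ssl
import time
from typing import Optional

SECONDS_PER_DAY = 86400


def fetch_not_after(hostname: str, port: int = 443, timeout: float = 5.0) -> str:
    """Connect over TLS and return the server certificate's notAfter field."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Convert a notAfter string like 'Jan 15 09:34:43 2018 GMT' into days left."""
    expires_at = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires_at - current) / SECONDS_PER_DAY


def needs_renewal(not_after: str, threshold_days: int = 30,
                  now: Optional[float] = None) -> bool:
    """True when the certificate expires within `threshold_days` (assumed 30)."""
    return days_until_expiry(not_after, now) < threshold_days
```

Run from cron, `needs_renewal(fetch_not_after("example.com"))` could open a ticket or post to your team channel (`example.com` stands in for your own host). An already-expired certificate makes the handshake itself fail with a verification error, which is also worth alerting on.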

7. Be proactive with communication

Don’t just assume someone else will do it! If something happening now or coming up could affect anyone at the company (like upcoming maintenance), tell them beforehand so they know what might happen during their shifts too. This includes keeping everyone updated about potential risks.

A good rule of thumb is to schedule on-call overrides whenever you think a deployment has the potential to go wrong.

They grow up so fast

While I wish I could tell you how to get your servers to communicate better about what’s wrong, or how to sleep train them, it turns out parenthood tricks just don’t work the same on servers. But hopefully my tips help you be better prepared for ‘server’hood, especially when you get the dreaded job of being on call. Now if only I could figure out how to give servers (and babies) good names. At least we don’t have to potty train them!